The Hidden Economics of Legacy Systems: Why They Cost More Than You Think

Legacy Systems as Financial Liabilities, Not Technical Artifacts

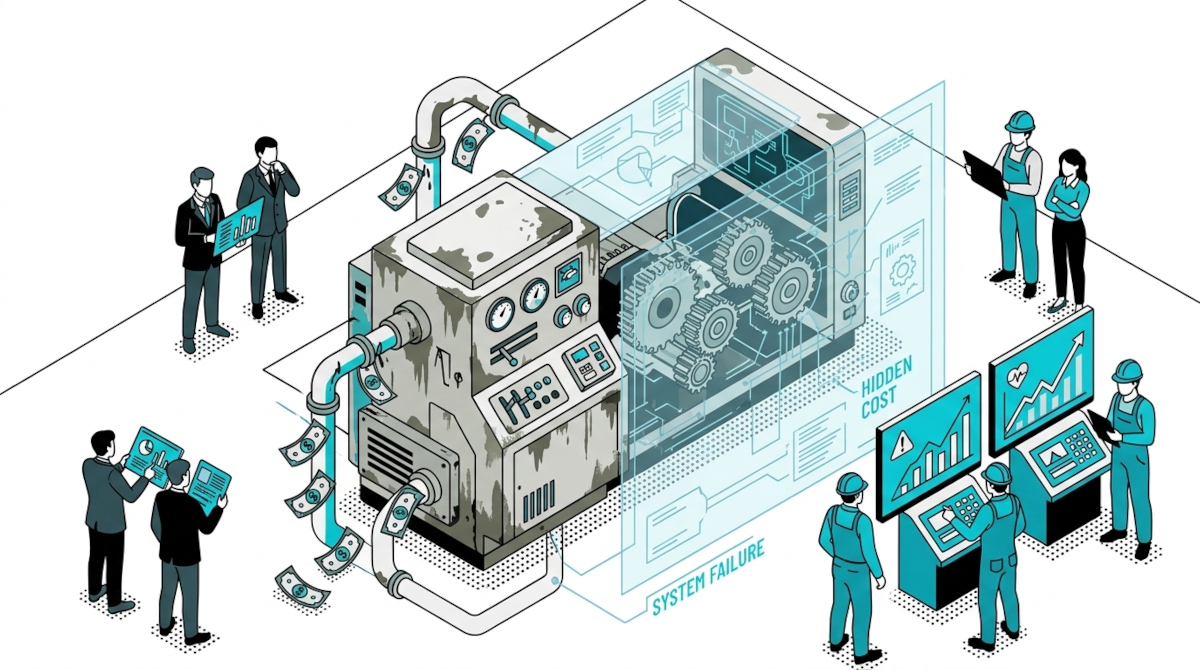

Most organizations still categorize legacy systems as a technical concern—something owned by IT, managed through maintenance budgets, and tolerated as part of operational reality. This framing is not just outdated—it is financially dangerous.

Legacy systems are not passive infrastructure. They actively consume capital, constrain growth, and introduce compounding financial risk across the enterprise. Yet because these costs are distributed across departments (engineering, operations, compliance, and even revenue teams), they rarely appear as a single, measurable line item. The result is a systemic underestimation of their true economic impact.

At the executive level, this creates a blind spot. CIOs see rising maintenance overhead. CTOs experience slowed delivery cycles. CFOs notice budget creep without clear attribution. Chief risk officers (CROs) face increasing audit complexity. Each function observes a symptom, but not the unified cause.

The reality is this: legacy systems behave more like financial liabilities than technical assets. They generate ongoing costs, reduce organizational agility, and expose the business to operational and regulatory risk. Unlike modern, scalable platforms that improve efficiency over time, legacy environments degrade—requiring more effort, more expertise, and more capital just to sustain baseline performance.

This misclassification has real consequences. When legacy is treated as a technical inconvenience, modernization is often delayed, underfunded, or narrowly scoped. But when reframed as a financial burden—one that compounds annually—it becomes a strategic priority.

The shift in perspective is critical. Because once leadership begins to evaluate legacy systems through an economic lens, a different question emerges:

Not “How do we maintain this system?”

—but—

“How much is this system actually costing us to keep?”

That question is where real transformation begins.

The Visible Costs: Maintenance, Infrastructure, and Licensing Overhead

Before examining the deeper financial impact of legacy systems, it’s important to acknowledge the costs that organizations already recognize—and often accept as unavoidable.

These are the visible, budgeted expenses: the ones that appear in IT forecasts, procurement plans, and annual reports. They form the baseline justification for maintaining legacy environments, yet they tell only part of the story.

At the forefront is maintenance. Legacy systems require continuous intervention to remain operational—bug fixes, minor enhancements, and compatibility adjustments. Unlike modern architectures that benefit from modular updates and automated pipelines, legacy environments often demand manual effort for even the simplest changes. Industry benchmarks suggest that maintaining legacy systems can cost 20–25% more than supporting modern platforms, largely due to inefficiencies in tooling and architecture.

Infrastructure is another significant component. Many legacy systems are tightly coupled to on-premises environments or aging mainframes, where scalability is limited and operational costs are high. Power consumption, hardware depreciation, and specialized hosting requirements all contribute to a cost structure that does not flex with business demand. Even when partially migrated to the cloud, these systems often carry inefficiencies that negate expected savings.

Licensing further compounds the issue. Proprietary technologies, outdated databases, and niche middleware frequently come with escalating licensing fees. In many cases, organizations continue to pay premium costs for systems that no longer deliver proportional value—simply because replacing them appears too risky or complex.

These costs are visible, predictable, and routinely approved. They are tracked, audited, and—perhaps most critically—normalized.

But this normalization creates a false sense of control.

Because while organizations focus on optimizing these surface-level expenses, a far larger set of costs operates beneath them: untracked, unquantified, and considerably more damaging.

The real financial burden of legacy systems does not lie in what you can see as a budget line.

It lies in everything you can’t.

Beneath the Surface: The Multi-Layered Hidden Cost Structure

If the visible costs of legacy systems were the full picture, modernization would be a straightforward financial decision. Budgets would be optimized, infrastructure would be upgraded, and organizations would move forward with clarity.

But that is not how legacy systems behave in practice.

The most significant costs are not the ones that appear in financial reports—they are the ones embedded in daily operations, silently accumulating across teams, workflows, and decisions. These hidden costs are fragmented, indirect, and often misattributed, making them far more dangerous than their visible counterparts.

Legacy systems introduce friction at every layer of the organization. Engineering teams slow down. Business initiatives take longer to execute. Risk exposure increases. Yet none of these impacts are typically labeled as “legacy cost.” Instead, they show up as delayed projects, inflated delivery budgets, audit challenges, or missed market opportunities.

What makes this structure particularly problematic is its compounding nature.

A delay in development is not just a productivity issue—it affects time-to-market.

A lack of system understanding is not just a technical gap—it drives rework and increases error rates.

A compliance workaround is not just a temporary fix—it raises long-term regulatory risk.

Individually, these effects may seem manageable. Collectively, they form a multi-layered cost structure that far exceeds the visible expenses of maintenance and infrastructure.

This is where most organizations lose financial visibility.

Because these costs are distributed, they are rarely aggregated. Because they are indirect, they are rarely challenged. And because they are normalized, they are rarely questioned.

To truly understand the economics of legacy systems, organizations must shift from tracking isolated expenses to analyzing systemic impact. That means identifying where time is lost, where knowledge is fragmented, where errors originate, and where risk accumulates.

Only then does the full financial weight of legacy systems become visible.

And only then does modernization become not just a technical initiative—but a financial imperative.
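As a rough illustration of moving from isolated expenses to an aggregated view, the sketch below rolls up cost entries that different departments book under their own labels into a single legacy-attributable total. Every name and figure is hypothetical, and the `legacy_related` flag is assumed to come from a manual review, not from any existing tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostEntry:
    department: str       # who booked the cost
    label: str            # how it appears in that department's reporting
    annual_cost: float    # all entries in the same currency
    legacy_related: bool  # assumed to be set by manual review


def legacy_cost_by_department(entries):
    """Sum only the legacy-related entries, grouped by department."""
    totals = defaultdict(float)
    for entry in entries:
        if entry.legacy_related:
            totals[entry.department] += entry.annual_cost
    return dict(totals)


# Hypothetical figures, for illustration only.
entries = [
    CostEntry("engineering", "delayed project overrun", 120_000.0, True),
    CostEntry("compliance", "manual audit evidence", 45_000.0, True),
    CostEntry("operations", "incident response overtime", 30_000.0, True),
    CostEntry("engineering", "new product research", 80_000.0, False),
]

by_department = legacy_cost_by_department(entries)
total_legacy_cost = sum(by_department.values())
print(by_department)
print(total_legacy_cost)
```

None of the three flagged entries is labeled "legacy cost" in its own department, which is why the total only appears once the entries are tagged and summed together.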

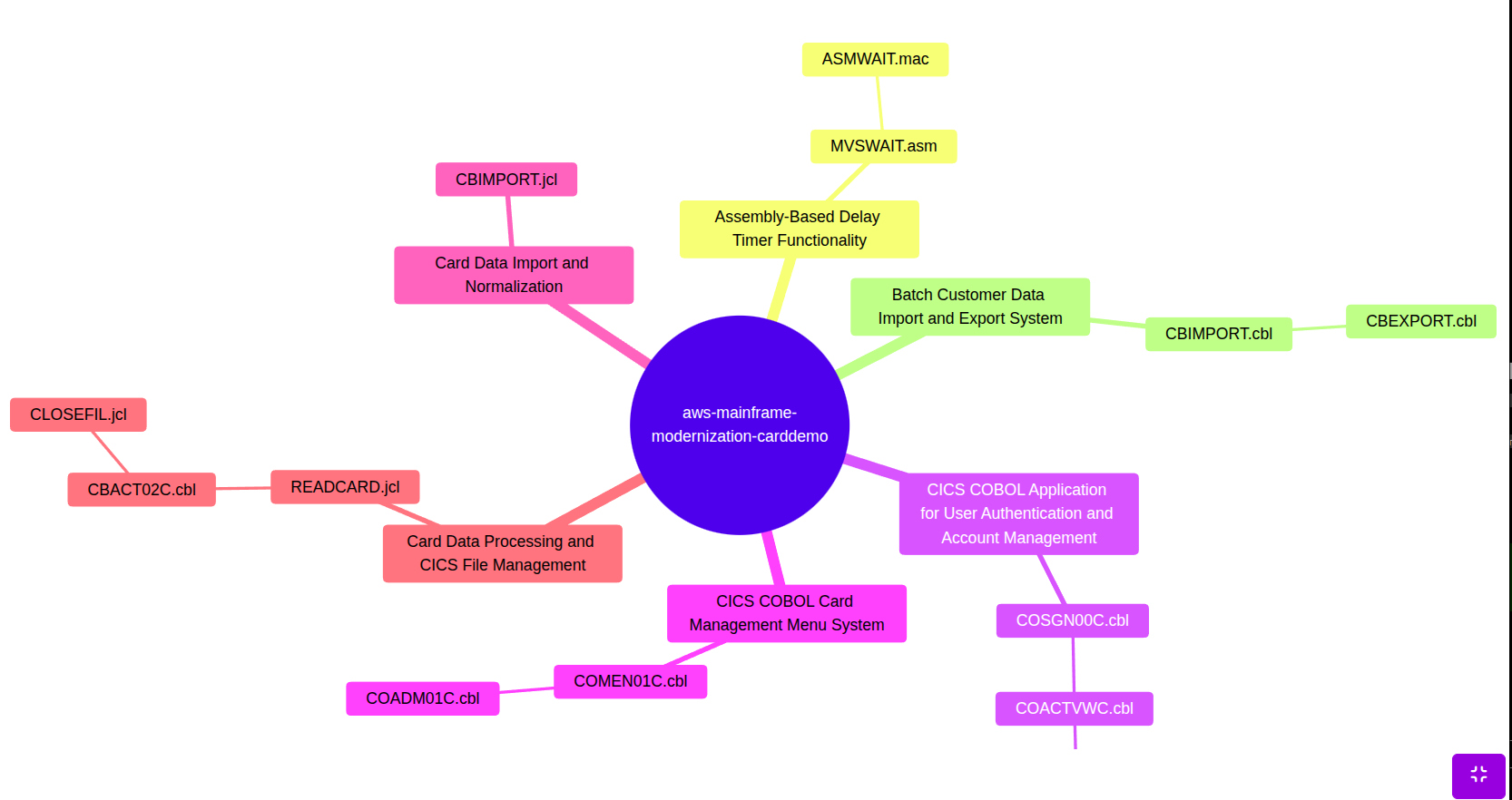

Productivity Drag: How Legacy Systems Erode Engineering Velocity

One of the most significant, and most consistently underestimated, cost drivers in legacy environments is productivity loss. Unlike infrastructure or licensing, this cost has no budget line of its own. Yet it directly affects delivery timelines, resource utilization, and ultimately revenue generation.

In modern development environments, velocity is driven by clarity. Engineers can quickly understand systems, make changes with confidence, and deploy features efficiently. Legacy systems operate in the opposite way.

Developers spend a disproportionate amount of time not building—but deciphering.

Poorly documented codebases, tightly coupled architectures, and outdated programming paradigms force engineers into a constant cycle of investigation. Before a single line of new code is written, hours—sometimes days—are spent trying to understand how existing logic behaves, where dependencies exist, and what unintended consequences a change might trigger.

Research indicates that developers working in legacy environments can lose up to 17 hours per week navigating these inefficiencies. At the upper end, that is more than 40% of a standard 40-hour workweek spent on non-productive effort.
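To put that figure in financial terms, here is a minimal sketch of the arithmetic. The team size, loaded hourly rate, and number of working weeks are hypothetical inputs chosen for illustration, and 17 hours is the upper bound quoted above, so the result is a ceiling and not a typical value.

```python
def weekly_share_lost(hours_lost_per_week, workweek_hours=40.0):
    """Fraction of the workweek spent deciphering rather than building."""
    return hours_lost_per_week / workweek_hours


def annual_cost_of_lost_time(developers, hours_lost_per_week,
                             loaded_hourly_rate, working_weeks=46):
    """Yearly cost of the lost hours across a whole team."""
    return developers * hours_lost_per_week * working_weeks * loaded_hourly_rate


share = weekly_share_lost(17)
cost = annual_cost_of_lost_time(
    developers=20,            # hypothetical team size
    hours_lost_per_week=17,   # upper bound from the text
    loaded_hourly_rate=75.0,  # hypothetical fully loaded rate
)
print(f"{share:.1%} of the week, {cost:,.0f} per year")
```

Because the cost scales linearly with headcount, adding developers to the same environment raises this figure at the same rate.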

The implications extend far beyond engineering.

Slower development cycles delay product releases.

Delayed releases impact competitive positioning.

And reduced throughput increases the cost of every feature delivered.

Over time, this creates a measurable financial drag:

- Higher engineering cost per unit of output

- Lower return on development investment

- Reduced capacity for innovation

What makes this particularly challenging is that organizations often attempt to solve the problem by adding more resources. But without addressing the root cause—lack of system understanding—this only scales inefficiency.

This is where a structural shift becomes critical.

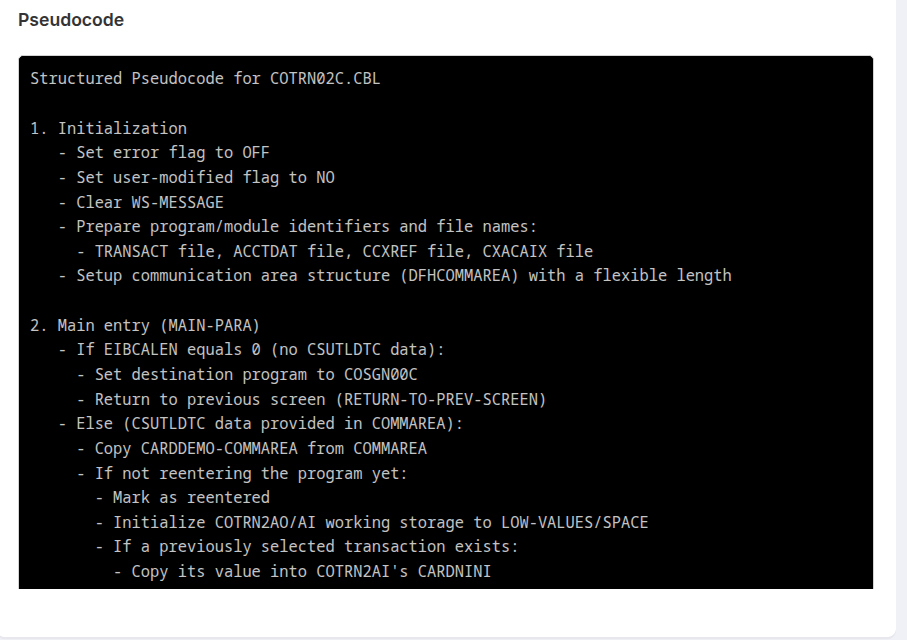

Instead of relying on tribal knowledge or manual code exploration, organizations can enable instant access to system intelligence. With AI-driven knowledge layers, developers can query systems in natural language, retrieve contextual answers, and drastically reduce the time spent searching for information.

The impact is immediate and compounding:

- Faster onboarding of new developers

- Reduced dependency on senior engineers

- Increased delivery velocity across teams

In financial terms, this is not just a productivity improvement. It is a direct reduction in operating cost per unit of output.

Because in legacy environments, the true expense is not just maintaining the system.

It is the time lost trying to understand it.

Knowledge Fragility: The Rising Cost of Tribal Expertise

While productivity loss slows systems down, knowledge fragility puts them at risk.

Legacy environments are often sustained not by documentation or structured understanding, but by a shrinking group of individuals who carry critical system knowledge in their heads. Over time, this creates a dangerous dependency model—one where operational continuity relies on a few key people rather than resilient, accessible systems.

In many enterprises, particularly across banking, healthcare, and manufacturing, a significant portion of legacy expertise is concentrated among senior engineers approaching retirement. These individuals understand decades of business logic, undocumented edge cases, and system behaviors that are not captured anywhere else.

When that knowledge leaves, it does not transfer easily—it disappears.

The financial implications are immediate and severe:

- Increased reliance on expensive niche talent

- Longer onboarding cycles for new engineers

- Higher risk of operational disruption

- Escalating consulting and support costs

Organizations often attempt to mitigate this by documenting systems manually. But traditional documentation approaches are slow, incomplete, and quickly outdated—especially in complex, interdependent legacy architectures.

The result is a widening knowledge gap.

As systems evolve and experienced personnel exit, the cost of understanding the system rises steeply. What was once a manageable dependency becomes a structural risk that affects delivery timelines, incident response, and long-term maintainability.

This is where a more scalable approach becomes essential.

Instead of relying on manual knowledge transfer, organizations can systematically extract and structure institutional knowledge directly from the codebase itself. AI-driven analysis can generate system-level summaries, map business logic, and create continuously updated documentation that is accessible across both technical and non-technical teams.
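The simplest form of extracting structure directly from the codebase can be sketched without any AI at all. The example below uses plain static parsing with Python's standard `ast` module to list each function, its parameters, and whether it is documented. It is only an illustration of the idea: real legacy code is often written in other languages, and the sample source and function names are invented.

```python
import ast


def summarize_module(source):
    """Return one summary row per function defined in the source text."""
    tree = ast.parse(source)
    rows = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            rows.append({
                "name": node.name,
                "line": node.lineno,
                "args": [a.arg for a in node.args.args],
                "doc": ast.get_docstring(node) or "(undocumented)",
            })
    # ast.walk does not guarantee source order, so sort by line number.
    return sorted(rows, key=lambda row: row["line"])


# Invented sample standing in for a legacy module.
sample = '''
def apply_late_fee(balance, days_overdue):
    """Add a 2% fee once an invoice is more than 30 days overdue."""
    if days_overdue > 30:
        return balance * 1.02
    return balance

def legacy_adjust(x):
    return x - 1
'''

summary = summarize_module(sample)
for row in summary:
    args = ", ".join(row["args"])
    print(f'{row["name"]}({args}) at line {row["line"]}: {row["doc"]}')
```

Even this small inventory makes undocumented business logic visible, which is the starting point any richer, continuously updated documentation would build on.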

The impact extends beyond risk mitigation:

- Reduced dependency on individual contributors

- Faster onboarding and cross-team collaboration

- Preservation of critical business logic

- Greater operational resilience

From a financial perspective, this transforms knowledge from a fragile, diminishing asset into a scalable, reusable resource.

Because in legacy environments, the real risk is not just outdated technology.

It is the loss of understanding—and the cost of trying to rebuild it.

Error, Rework, and Downtime: The Cost of Misunderstood Systems

In legacy environments, lack of system clarity does not just slow development—it introduces errors. And errors, in turn, create one of the most expensive and compounding cost layers: rework.

When developers operate without a clear understanding of underlying business logic, even well-intentioned changes can produce unintended consequences. A small update in one module may trigger failures in another. A misunderstood dependency can lead to incorrect implementations. And because these systems are often tightly coupled, identifying the root cause becomes a time-intensive process.

The result is a recurring cycle:

Build → Break → Investigate → Fix → Retest

Each loop consumes time, resources, and budget.
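One way to reason about the cost of that loop is a simple expected-value model. The sketch below assumes each attempt fails independently with the same probability, which is a simplification, and all hours and probabilities are hypothetical. Under that assumption the expected number of rework loops is p / (1 - p).

```python
def expected_hours_per_feature(build_hours, rework_hours, failure_probability):
    """Expected effort when each attempt fails independently with probability p.

    The number of failed loops then follows a geometric distribution,
    so the expected count of rework loops is p / (1 - p).
    """
    if not 0.0 <= failure_probability < 1.0:
        raise ValueError("failure_probability must be in [0, 1)")
    expected_loops = failure_probability / (1.0 - failure_probability)
    return build_hours + rework_hours * expected_loops


# Hypothetical inputs: 40 hours to build, 16 hours per rework loop.
for p in (0.0, 0.2, 0.5):
    hours = expected_hours_per_feature(40.0, 16.0, p)
    print(f"p={p}: {hours:.1f} expected hours")
```

The relationship is not linear: as the failure probability approaches 1, the expected effort grows without bound, which is why reducing ambiguity before a change is made tends to pay off more than speeding up each fix.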

Rework is rarely tracked as a standalone cost, but its financial impact is substantial:

- Increased development hours per feature

- Extended testing and QA cycles

- Delayed releases and missed deadlines

- Higher overall project costs

Over time, this erodes confidence—not just within engineering teams, but across the business. Stakeholders begin to anticipate delays. Delivery timelines become padded. Innovation slows as teams prioritize stability over progress.

The problem intensifies when errors reach production.

Legacy systems are often more fragile because of their complexity and lack of visibility. When failures occur, diagnosing and resolving them can take significantly longer than in modern environments. This extended downtime has direct financial consequences, especially in regulated industries where system availability is critical.

In sectors like banking or healthcare, even brief outages can result in:

- Immediate revenue loss

- Regulatory scrutiny

- Customer dissatisfaction

- Long-term reputational damage

What makes this cost category particularly dangerous is its unpredictability. Unlike maintenance or licensing, error and downtime costs are variable—and often spike at the worst possible moments.

Addressing this requires more than better testing. It requires better understanding.

By generating clear, structured interpretations of system logic—such as pseudocode, flow-level explanations, and dependency mapping—organizations can reduce ambiguity before changes are made. Developers gain confidence in what the system is doing, why it behaves a certain way, and how modifications will impact it.
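Dependency mapping in particular lends itself to a short sketch. Assuming a map of which module depends on which is already available (the module names below are invented), a breadth-first walk over the reversed edges lists everything a change could affect, directly or transitively.

```python
from collections import deque


def impacted_modules(depends_on, changed):
    """Return every module that directly or transitively depends on `changed`."""
    # Invert the edges: for each module, record who depends on it.
    dependents = {}
    for module, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    seen.discard(changed)  # only relevant if the graph contains a cycle
    return seen


# Hypothetical dependency map: key depends on each module in its list.
depends_on = {
    "billing": ["rates", "ledger"],
    "reports": ["billing"],
    "ledger": ["storage"],
    "notifications": ["reports"],
    "rates": [],
    "storage": [],
}

impact = impacted_modules(depends_on, "ledger")
print(sorted(impact))  # ['billing', 'notifications', 'reports']
```

Running this before a change turns "a small update in one module may trigger failures in another" into a concrete list of modules to review and retest.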

The outcome is not just fewer errors—it is a fundamental shift in delivery efficiency:

- Reduced rework cycles

- Faster root cause analysis

- Shorter recovery times during incidents

- More predictable project execution

From a financial standpoint, this transforms error handling from a reactive cost center into a controlled, minimized risk.

Because in legacy systems, mistakes are not just technical issues.

They are expensive, repeatable financial events.

Compliance, Security, and Regulatory Exposure in Legacy Environments

For organizations operating in regulated industries, legacy systems introduce a category of risk that extends beyond operational inefficiency—regulatory exposure.

Regulations and frameworks such as HIPAA in healthcare, the Basel framework (informally "Basel IV" in its latest revision) in banking, and NIST standards such as SP 800-53 in federal environments are continuously evolving. They demand transparency, traceability, and demonstrable control over systems and data. Many legacy architectures, by contrast, were not designed with these requirements in mind.

This creates a structural mismatch.

Legacy systems often lack:

- Clear audit trails

- Up-to-date documentation

- Visibility into data flows and dependencies

- Standardized security controls

As a result, compliance becomes reactive rather than proactive. Instead of building systems that inherently meet regulatory standards, organizations are forced to retrofit compliance—layering controls, documentation, and reporting mechanisms onto systems that were never designed to support them.

This approach is not only inefficient—it is expensive.

Compliance retrofitting drives:

- Increased audit preparation time

- Higher consulting and advisory costs

- Manual evidence collection across systems

- Greater likelihood of audit findings and penalties

More critically, it introduces ongoing risk.

Without a clear understanding of how data moves through legacy systems, organizations cannot confidently answer fundamental regulatory questions:

- Where is sensitive data stored?

- How is it processed?

- Who has access to it?

- What happens when it changes?

This lack of visibility elevates both security and compliance risk. Vulnerabilities remain hidden. Gaps go undetected. And when issues are discovered—often during audits—the cost of remediation is significantly higher than if they had been identified earlier.
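One way to surface such gaps before an audit does is to record, for each sensitive data element, whether the four questions above can actually be answered. The sketch below is a hypothetical inventory format, not a prescribed compliance schema; the field names, element names, and system names are all invented.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DataElement:
    name: str
    sensitive: bool
    stored_in: Optional[str] = None                         # where is it stored?
    processed_by: List[str] = field(default_factory=list)   # how is it processed?
    readable_by: List[str] = field(default_factory=list)    # who has access?
    change_logged: Optional[bool] = None                    # what happens on change?


def unanswered_questions(element):
    """List which of the four questions has no recorded answer."""
    gaps = []
    if element.stored_in is None:
        gaps.append("storage location")
    if not element.processed_by:
        gaps.append("processing steps")
    if not element.readable_by:
        gaps.append("access list")
    if element.change_logged is None:
        gaps.append("change tracking")
    return gaps


def compliance_gaps(inventory):
    """Map each sensitive element to its unanswered questions, if any."""
    gaps = {}
    for element in inventory:
        if not element.sensitive:
            continue
        missing = unanswered_questions(element)
        if missing:
            gaps[element.name] = missing
    return gaps


# Invented inventory, for illustration only.
inventory = [
    DataElement("patient_id", True, stored_in="claims_db",
                processed_by=["nightly_batch"], readable_by=["billing_team"],
                change_logged=True),
    DataElement("diagnosis_code", True, stored_in="claims_db"),
    DataElement("ui_theme", False),
]

gaps = compliance_gaps(inventory)
print(gaps)
```

An empty result would mean every sensitive element has a recorded answer to all four questions; anything else is a list of work to do before the auditor asks.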

A more sustainable approach requires shifting from reactive compliance to embedded understanding.

By analyzing legacy systems at a structural level—mapping data flows, extracting business logic, and generating continuous documentation—organizations can create a transparent foundation for compliance. Instead of scrambling to prepare for audits, they operate in a state of readiness.

The financial benefits are substantial:

- Reduced audit preparation costs

- Faster response to regulatory inquiries

- Lower risk of fines and penalties

- Improved security posture across systems

In this context, compliance is no longer just a legal requirement—it becomes a measurable cost driver tied directly to system visibility and understanding.

Because in legacy environments, the greatest regulatory risk is not non-compliance by intent.

It is non-compliance by obscurity.